DeepSeek V4 Architecture Explained: Solving the 1M Token Context Bottleneck

Published on May 3, 2026 • 8 min read

Building large language models with massive context windows usually runs into the same hard limit: GPU memory. The Key-Value (KV) cache required to maintain a 1-million-token context window can demand tens to hundreds of gigabytes of VRAM per sequence, crippling inference speeds and pricing out everyone except the largest, best-funded labs.

DeepSeek V4 breaks this pattern. Built by a lean team with severely constrained compute resources, the 1.6-trillion-parameter Mixture-of-Experts (MoE) model manages to rival closed-source giants like GPT-4 and Claude 3.5 Opus. More impressively, it does so while reducing the required KV cache footprint by up to 90% and cutting single-token FLOPs by nearly 4x compared to its predecessor. Here is a technical breakdown of the architectural changes that made this possible.

The 1M Token Problem: Why Standard Attention Fails

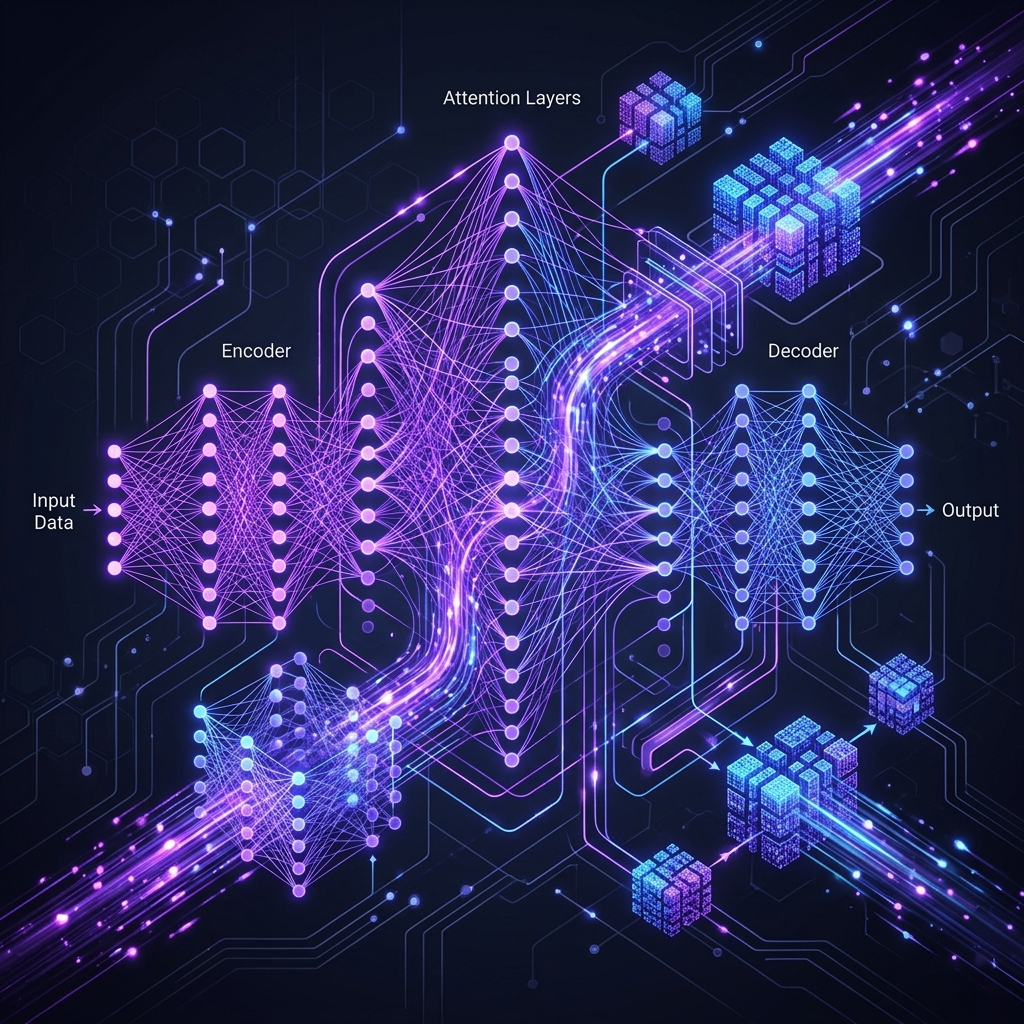

In a standard Transformer architecture, as introduced in the seminal "Attention Is All You Need" paper, each new token's query is scored against the key of every previous token in the sequence. At 1,000 tokens, this is computationally trivial. At 1,000,000 tokens, the number of comparisons grows quadratically and becomes astronomical.
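
To make the scaling concrete, the short sketch below counts query-key comparisons for a single attention head in a single layer, assuming causal attention where each token attends to itself and every earlier token. The function name is illustrative.

```python
def causal_comparisons(seq_len: int) -> int:
    """Query-key comparisons for one head in one layer under causal attention.

    Token i (1-indexed) attends to i positions (itself plus all earlier
    tokens), so the total is 1 + 2 + ... + seq_len.
    """
    return seq_len * (seq_len + 1) // 2


for n in (1_000, 1_000_000):
    print(f"{n:>9,} tokens -> {causal_comparisons(n):,} comparisons")
```

A sequence that is 1,000 times longer needs roughly a million times more comparisons, and that count is repeated for every head in every layer.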

To avoid recalculating the entire sequence for every new token, models store the key and value vectors of earlier tokens in a running memory known as the KV cache. For a 1M-token prompt, this cache becomes so massive that the GPUs spend more time moving data, including across the interconnect between devices and server racks, than they do actually calculating the next token. This bandwidth bottleneck forces the system to stall.
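
A back-of-the-envelope estimate shows why the cache gets so large. The sketch below uses the usual sizing formula for a standard attention cache (one key vector and one value vector per token, per layer, per KV head). The configuration numbers are hypothetical, chosen only for illustration; they are not DeepSeek V4's actual dimensions.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence.

    The leading factor 2 covers keys plus values; bytes_per_value=2
    assumes 16-bit (FP16/BF16) storage.
    """
    return 2 * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_value


# Hypothetical dense-attention configuration (not DeepSeek V4's real one).
size = kv_cache_bytes(seq_len=1_000_000, n_layers=60, n_kv_heads=8, head_dim=128)
print(f"{size / 1e9:.1f} GB per sequence")
```

Under these assumed numbers the cache comes to roughly 246 GB for a single sequence, which is more than one accelerator typically holds.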

The Solution: Hybrid Attention Architecture

Instead of throwing brute-force compute at the problem, DeepSeek V4 implements a highly optimized "Hybrid Attention" system. It treats past tokens differently based on their relevance, splitting the workload across three distinct pathways:

- Heavily Compressed Attention (HCA): This pathway aggressively compresses massive chunks of past context (e.g., 128 tokens into a single dense representation). It acts as the model's broad, high-level understanding of the entire document.

- Compressed Sparse Attention (CSA): Instead of scanning the entire history, CSA uses a fast internal scoring component called the Lightning Indexer. It rapidly scores compressed blocks of tokens and retrieves only the small subset most relevant to the current query.

- Sliding Window Attention (SWA): The model keeps a small, sliding window of the most recent tokens (e.g., the last 128 tokens) completely uncompressed. This preserves exact fidelity for the immediate context.

By interleaving these three strategies layer-by-layer, DeepSeek V4 achieves a 1M token context window while using only 10% of the KV cache memory required by traditional architectures.
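
The selection logic behind these pathways can be sketched in a few lines. This is a toy illustration, not DeepSeek's implementation: mean pooling stands in for the learned compression, a plain dot product stands in for the Lightning Indexer, and all function names and parameters are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def mean_pool_blocks(keys, block_size):
    """Compress each block of key vectors into a single mean vector."""
    pooled = []
    for start in range(0, len(keys), block_size):
        block = keys[start:start + block_size]
        dim = len(block[0])
        pooled.append([sum(vec[d] for vec in block) / len(block) for d in range(dim)])
    return pooled


def select_context(query, keys, block_size=4, top_k=1, window=4):
    """Return (selected older block indices, recent token indices) for one query.

    Older tokens are grouped into blocks and compressed, the blocks are scored
    against the query and only the top_k are kept (sparse retrieval), and the
    last `window` tokens stay uncompressed (sliding window).
    """
    window_start = max(0, len(keys) - window)
    compressed = mean_pool_blocks(keys[:window_start], block_size)
    scores = [dot(query, block) for block in compressed]
    ranked = sorted(range(len(compressed)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:top_k]), list(range(window_start, len(keys)))


# 12 toy tokens with 2-dimensional keys: three groups of four.
keys = [[1.0, 0.0]] * 4 + [[0.0, 1.0]] * 4 + [[1.0, 1.0]] * 4
blocks, recent = select_context(query=[0.0, 1.0], keys=keys)
print(blocks, recent)
```

In this toy run the query matches the second block of older tokens, so only that block is retrieved alongside the four most recent tokens. A heavily compressed pathway would instead attend to every pooled block, using a much larger block size.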

Taming Signal Explosions with mHC

Standard neural networks rely on residual connections (skip connections) to pass data through hundreds of layers without losing the original signal. However, in a 1.6T parameter model, these bypass lanes often cause the mathematical signal to amplify uncontrollably, leading to training crashes (signal explosions).

To prevent this, the DeepSeek team engineered Manifold-Constrained Hyper-Connections (mHC). Rather than allowing the residual streams to mix freely, mHC constrains the matrix that mixes them to be doubly stochastic (non-negative entries, with every row and every column summing to 1). The constraint is enforced with the Sinkhorn-Knopp algorithm, which alternately rescales the rows and columns of the matrix until both conditions hold. Because each mixed output is then a weighted average of the inputs, the mixing step cannot amplify the signal, which keeps training runs stable even at extreme scales.
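
A minimal sketch of the Sinkhorn-Knopp step, assuming a small positive 3x3 matrix and scalar "streams" purely for illustration. How the resulting matrix is parameterized and applied inside the real mHC layer is not covered here, and the function names are hypothetical.

```python
def sinkhorn_knopp(matrix, n_iters=50):
    """Alternately normalize rows and columns of a positive square matrix."""
    m = [row[:] for row in matrix]
    n = len(m)
    for _ in range(n_iters):
        # Row step: scale each row so it sums to 1.
        for i in range(n):
            row_sum = sum(m[i])
            m[i] = [v / row_sum for v in m[i]]
        # Column step: scale each column so it sums to 1.
        for j in range(n):
            col_sum = sum(m[i][j] for i in range(n))
            for i in range(n):
                m[i][j] /= col_sum
    return m


def mix(matrix, streams):
    """Mix scalar stream values with the given matrix (matrix-vector product)."""
    return [sum(w * x for w, x in zip(row, streams)) for row in matrix]


raw = [[1.0, 2.0, 3.0], [4.0, 1.0, 1.0], [2.0, 2.0, 5.0]]
ds = sinkhorn_knopp(raw)
streams = [3.0, -1.0, 4.0]
mixed = mix(ds, streams)
print("row sums:", [round(sum(row), 6) for row in ds])
print("total before/after:", sum(streams), round(sum(mixed), 6))
```

Columns summing to 1 keep the total across streams unchanged, and rows summing to 1 make each output a weighted average, so no output can exceed the largest input in magnitude.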

The Muon Optimizer and Z3 SMT Solvers

DeepSeek V4 also moves away from the industry-standard AdamW optimizer. Instead, the team uses an optimizer called Muon. Muon operates in two phases: it first pushes the network toward convergence with large, sweeping adjustments, and then applies smaller, more precise corrections to stabilize training. This two-phase approach resulted in noticeably faster training times.
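
In its publicly described form, Muon's core step is to orthogonalize the momentum matrix with a Newton-Schulz iteration before applying it as the weight update. The sketch below shows that step using the classic cubic iteration on a tiny 2x2 matrix. It is an assumption-laden illustration: it does not use the tuned coefficients found in Muon implementations or any DeepSeek-specific variant, and the helper names are hypothetical.

```python
import math


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def newton_schulz_orthogonalize(g, n_iters=10):
    """Approximate the orthogonal polar factor of a full-rank matrix g.

    Scales g to unit Frobenius norm so every singular value starts in (0, 1],
    then repeats the cubic update X <- 1.5*X - 0.5*(X X^T) X, which drives
    each singular value toward 1.
    """
    norm = math.sqrt(sum(v * v for row in g for v in row))
    x = [[v / norm for v in row] for row in g]
    for _ in range(n_iters):
        xxt_x = matmul(matmul(x, transpose(x)), x)
        x = [[1.5 * x[i][j] - 0.5 * xxt_x[i][j] for j in range(len(x[0]))]
             for i in range(len(x))]
    return x


momentum = [[3.0, 1.0], [-1.0, 2.0]]  # stand-in for a momentum matrix
update = newton_schulz_orthogonalize(momentum)
gram = matmul(update, transpose(update))
print([[round(v, 6) for v in row] for row in gram])
```

The product of the result with its own transpose comes out close to the identity, meaning the update has the same scale in every direction regardless of how lopsided the raw momentum was.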

Furthermore, to optimize GPU memory usage, the team wrote custom fused kernels (merging multiple operations into a single GPU kernel launch) using a domain-specific language called TileLang. Because hand-writing fused kernels is highly error-prone, they used the Z3 SMT solver to formally verify properties of their kernel code before deploying it, guarding against silent training corruption.

Frequently Asked Questions

What is the difference between DeepSeek V4 Pro and V4 Flash?

DeepSeek V4 Pro is the flagship 1.6-trillion-parameter MoE model (with 49B parameters active per token during inference), designed for complex coding and reasoning. V4 Flash is a distilled, more efficient version with 284 billion total parameters (13B active), designed for fast response times and high throughput while retaining the 1M-token context window.
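
The gap between total and active parameters is what keeps per-token compute manageable in an MoE model. A quick calculation from the figures above (the helper name is illustrative):

```python
def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of parameters used per token, with both counts in billions."""
    return active_params_b / total_params_b


# (active, total) in billions, taken from the figures quoted above.
models = {"V4 Pro": (49, 1600), "V4 Flash": (13, 284)}
for name, (active, total) in models.items():
    print(f"{name}: {active_fraction(active, total):.1%} of parameters active per token")
```

Both models touch only a few percent of their weights for any given token.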

How does Compressed Sparse Attention (CSA) save VRAM?

Instead of keeping every past token in active GPU memory (the KV cache), CSA compresses chunks of older tokens into dense representations. When the model needs to recall information, the Lightning Indexer rapidly scores these blocks and loads only the most relevant ones into active memory, reducing the VRAM footprint by nearly 90%.

Why is standard residual connection scaling unstable?

In massive networks, standard skip connections can cause the variance of the signal to amplify uncontrollably layer by layer, leading to loss divergence (a training crash). DeepSeek addressed this with Manifold-Constrained Hyper-Connections (mHC), which constrain how the residual signal is mixed so that it cannot grow from layer to layer.